The data intelligence gap nobody talks about

Your organization has spent months, perhaps years, building a robust cloud data warehouse. Snowflake, Amazon Redshift, Google BigQuery, or Databricks stores clean, transformed, and trusted data at your fingertips. Analysts write SQL queries that surface remarkable insights. Dashboards load in seconds.

And yet, the sales representative still logs into Salesforce every morning to a CRM that has no idea which customers are at risk of churning. The marketing team still exports CSVs to update email segments. The customer success team has no visibility into real-time product usage scores when they open a support ticket.

This is the data intelligence gap: the chasm between the insights your warehouse holds and the operational tools your teams actually use every day. Reverse ETL exists specifically to close this gap.

This guide is for business leaders and data decision makers who are evaluating reverse ETL tools, trying to understand what enterprise-grade reverse ETL software actually means, and looking for a framework to choose the right reverse ETL platform for their cloud data warehouse stack.

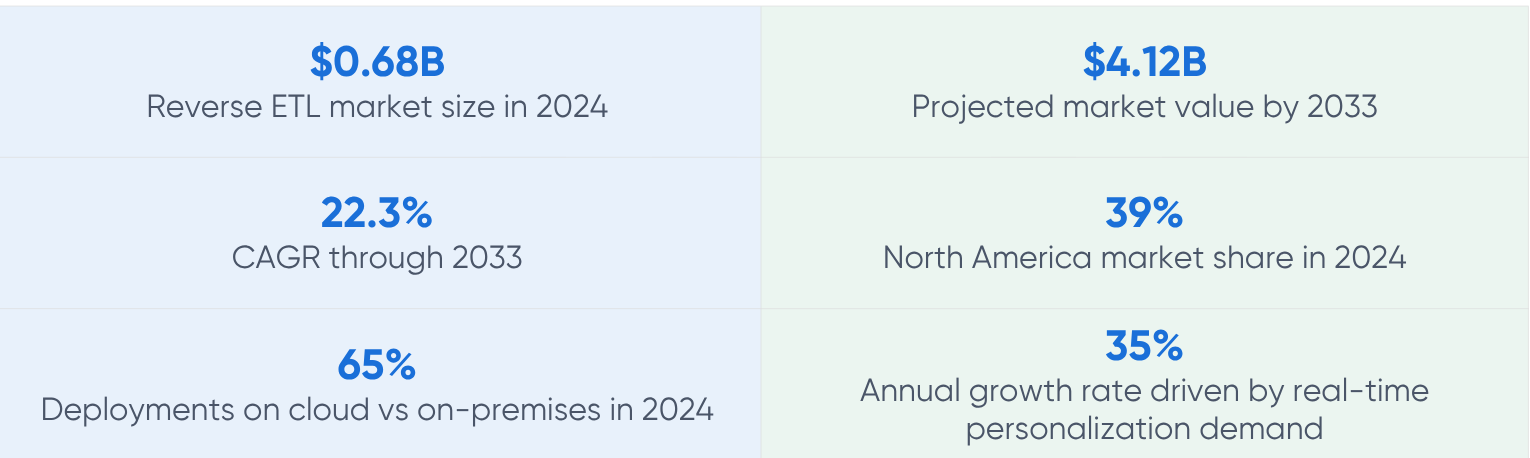

The market is moving fast and the numbers prove it

Source: MarketIntelo, Reverse ETL Market Research Report 2033

Source: Improvado, 12 Best Reverse ETL Tools for 2026

The broader ETL market context is equally telling. According to Mordor Intelligence, the ETL market is set to grow from $8.85 billion in 2025 to $21.25 billion by 2031 at a 15.72% CAGR, with a May 2025 milestone that says a great deal about where things are headed.

Source: Mordor Intelligence, Extract Transform and Load Market

What is reverse ETL and why does it matter for enterprises

Traditional ETL (Extract, Transform, Load) moves data from source systems into a central data warehouse. The flow is one-directional: source systems feed your warehouse, your warehouse feeds your analytics.

Reverse ETL flips this. It takes the modeled, enriched, and trusted data sitting in your warehouse and pushes it back out to the operational tools your teams use to run the business: CRM systems, marketing automation platforms, customer success tools, advertising platforms, product analytics tools, and more.

A simple way to think about it

ETL: Your CRM, ERP, and web events flow INTO Snowflake or Redshift.

Reverse ETL: Snowflake or Redshift pushes enriched customer scores, predicted churn probabilities, and segment memberships back INTO Salesforce, HubSpot, or Marketo.

The result: business teams work from warehouse-quality data without ever logging into a SQL editor.

What reverse ETL solves that traditional BI cannot

Business intelligence tools solve the visibility problem. Reverse ETL solves the activation problem. There is a meaningful difference between the two:

- BI tools help executives and analysts see what is happening in dashboards and reports.

- Reverse ETL tools help frontline teams act on that intelligence inside the tools they already operate in.

- A churn score in a Tableau dashboard is informational. That same churn score surfaced inside a customer success representative's Gainsight account is operational.

The problem with manual workarounds

Before reverse ETL platforms became mainstream, data teams used workarounds: scheduled CSV exports, Zapier automations, or hand-written scripts that pushed data from the warehouse to operational tools. These approaches have real costs:

- Engineering debt: every custom script is a fragile pipeline that breaks when APIs change or schemas evolve.

- Data latency: CSV exports are batch operations. By the time a sales rep uses yesterday's export, the data is already stale.

- Governance risk: untracked data movement creates compliance nightmares under GDPR, HIPAA, and SOC 2 frameworks.

- Scalability ceiling: manual processes cannot scale to serve dozens of teams and hundreds of use cases.

How reverse ETL works inside a cloud data warehouse stack

Understanding the mechanics helps business leaders ask the right questions when evaluating reverse ETL software. The process follows a consistent pattern across all major platforms:

Step 1: Connect to your warehouse

The reverse ETL platform connects to your cloud data warehouse (Snowflake, Redshift, BigQuery, Databricks, or similar) using a read-only connection. This is the data source.

Step 2: Define the data model

Data teams write SQL queries or use the platform's visual builder to define which data should be synced. This might be a segment of at-risk customers based on product usage data, or a table of accounts enriched with predictive health scores.

Step 3: Map to destinations

The platform maps warehouse columns to fields in the destination application. Customer ID in Redshift maps to Account ID in Salesforce. Churn score in BigQuery maps to a custom field in Gainsight.

Step 4: Sync on a schedule or in real time

Syncs can run on a defined schedule (hourly, daily) or be triggered in real time by warehouse events. Enterprise-grade reverse ETL platforms support both modes with observable pipeline health, alerting, and audit logging.

Step 5: Monitor and govern

Production-grade deployments require observability. Business leaders should expect sync logs, failure notifications, data lineage tracking, and role-based access controls as baseline requirements, not optional add-ons.

High-value use cases for enterprise reverse ETL

The following use cases represent where enterprise teams are seeing the most measurable business impact from reverse ETL platforms today.

Revenue operations and CRM enrichment

Sales teams make decisions based on the data in their CRM. When that data is enriched with product usage, purchase history, support ticket patterns, and predictive scores from the warehouse, conversion rates improve significantly. Industry benchmarks report a 25 to 45 percent increase in lead conversion rates for sales organizations using reverse ETL, driven by access to enriched CRM data including product usage signals, engagement scores, and predictive qualification insights.

Source: Integrate.io, Reverse ETL Usage Statistics 2026

Marketing audience activation

Warehouse-quality audience segments, built with complete behavioral data, out-perform segments created inside marketing tools that only see their own touchpoints. Reverse ETL enables marketing teams to sync precise customer segments to Google Ads, Meta, LinkedIn, HubSpot, and Marketo without manual exports. Companies using reverse ETL for audience synchronization report a 25 to 40 percent improvement in return on ad spend through real-time audience updates.

Source: Integrate.io, Reverse ETL Usage Statistics 2026

Customer success and churn prevention

Customer success teams operating from incomplete data miss the early signals of churn. When product usage data, support ticket velocity, and contract renewal timelines from the warehouse flow directly into CS platforms like Gainsight or Totango, teams intervene earlier and more effectively.

Personalization and product-led growth

For product-led growth companies, syncing warehouse behavioral data back to user-facing tools enables hyper-personalized in-app experiences. One reported outcome for PLG companies using reverse ETL was a 34 percent monthly recurring revenue growth over 12 months, coupled with a 25 percent improvement in free-to-paid activation rates.

Source: Contrary Research via Integrate.io

Financial services and risk management

Banks and insurers use reverse ETL to push risk scores and compliance flags from their data warehouse into underwriting systems and fraud detection platforms, allowing frontline analysts to act on warehouse intelligence without building separate API integrations for each system.

What enterprise-grade reverse ETL actually means

The term enterprise-grade is overused in software marketing. For reverse ETL platforms specifically, it translates into a concrete set of capabilities that matter when you are running data pipelines in regulated industries or at scale. Business leaders should evaluate vendors against these criteria.

Data governance and compliance

Enterprise reverse ETL software must support role-based access controls (RBAC) to determine who can create, edit, or trigger syncs. It must provide data lineage tracking to answer audit questions about where data came from and where it went. For organizations operating under GDPR, HIPAA, or SOC 2, the platform should support PII masking, consent-based sync filtering, and audit log exports.

Observability and reliability

Broken syncs are not just an inconvenience. When a sales team's CRM is populated with stale churn scores because a sync silently failed at 2 AM, business decisions are made on bad data. Enterprise platforms must provide real-time sync monitoring, failure alerting via Slack or PagerDuty, and the ability to write sync logs back to the warehouse for historical audit trails.

Scale and performance

Enterprise use cases frequently involve syncing millions of records across dozens of destinations. The platform must handle incremental syncs efficiently, supporting change data capture (CDC) patterns that only move updated records rather than performing full-table refreshes. Platforms should also support parallel sync execution for high-volume use cases without degrading warehouse query performance.

Warehouse-first architecture

Genuine enterprise-grade reverse ETL platforms treat the data warehouse as the single source of truth. They do not introduce their own data storage layer or require data to leave the warehouse environment before being transformed. All logic, modeling, and transformation should happen inside the warehouse using SQL, dbt models, or the warehouse's native compute.

Security and deployment model

For organizations in regulated industries, the deployment model is not trivial. A reverse ETL platform that stores credentials, caches data, or routes traffic through its own infrastructure introduces security and compliance risk. The gold standard for enterprise deployments is a model where data movement is orchestrated by the platform but data itself never leaves the customer's own cloud environment. This is directly analogous to what LakeStack delivers for cloud data foundations on AWS: infrastructure that runs inside the customer's own AWS account, ensuring 100 percent data control with no data leaving the environment.

The enterprise buyer's checklist

Supported warehouses: Does it connect natively to your warehouse (Snowflake, Redshift, BigQuery, Databricks)?

Destination coverage: Does it cover your core operational tools and have a credible roadmap for the rest?

Security model: Does data route through the vendor's infrastructure, or stay in your environment?

Compliance: RBAC, audit logs, data lineage, PII masking available out of the box?

Observability: Real-time sync health, failure alerting, and sync-back-to-warehouse logging?

Pricing predictability: Do costs scale linearly with data volume, or does pricing become opaque at scale?

Support model: Is there a named CSM and contractual SLA, or just a community forum?

Reverse ETL in the context of the modern data stack

Reverse ETL does not operate in isolation. It is a layer within the broader modern data stack, and understanding its position helps leaders make better architectural decisions.

The modern data stack in 2026 broadly looks like this: data from SaaS applications, databases, and event streams is ingested into a cloud data warehouse using ELT tools. Inside the warehouse, data engineers and analytics engineers transform and model that data using tools like dbt. Business intelligence tools like Looker, Tableau, or Amazon QuickSight sit on top of the warehouse to serve reporting and exploration.

Reverse ETL completes the loop. Where BI tools serve the analytics and exploration layer, reverse ETL serves the operational activation layer. They are complementary, not competing.

For organizations running cloud data infrastructure on AWS, this architecture has particular coherence. Platforms like LakeStack that deploy inside a customer's own AWS account using services such as AWS Glue, Amazon Redshift, Amazon Athena, and AWS Lake Formation already establish the governed, AI-ready data foundation that reverse ETL tools need as their source of truth. The path from raw data to operational activation becomes more reliable when both the data foundation and the activation layer share the same governance and lineage framework.

Related reading on LakeStack

Understanding how a governed data foundation supports downstream operational use cases like reverse ETL is covered in depth on the LakeStack resources page. The LakeStack platform by Applify builds this foundation inside a customer's AWS environment in under four weeks, giving data teams the trusted, modeled data that reverse ETL tools require to function reliably at enterprise scale.

A practical decision framework for business leaders

Choosing a reverse ETL platform is not primarily a technical decision. It is a business strategy decision with technical implications. The following framework helps business leaders structure their evaluation.

Start with use cases, not features

The single biggest mistake in reverse ETL evaluations is starting with a feature matrix comparison. Start instead with a prioritized list of three to five specific use cases: which team, which tool, which data, and what business outcome. A company whose primary use case is CRM enrichment for a sales team of 200 people will make a very different choice than one whose primary use case is syncing audience segments to Meta and Google for a programmatic advertising operation.

Assess your data maturity

Reverse ETL tools assume your warehouse data is clean, modeled, and trusted. If your warehouse is a landing zone of raw, unmodeled data, the first investment should be in data transformation and governance infrastructure, not reverse ETL. Platforms like Census explicitly document this assumption: they require data engineering work upstream before delivering value downstream.

Evaluate total cost of ownership

Published pricing for reverse ETL platforms is a starting point, not the full picture. As data volume grows, usage-based pricing models (per rows synced, per monthly active rows, per destination field) can escalate quickly. Request scenario modeling from vendors for your expected data volumes at 1x, 3x, and 10x current scale. Factor in the engineering time required to build and maintain models, the cost of any required professional services, and the cost of any adjacent tooling the platform requires.

Pilot with a high-impact, low-risk use case

Rather than running a parallel evaluation across multiple tools for a complex use case, identify a single high-value, low-risk use case for a controlled pilot. CRM enrichment with churn scores is a classic starting point: the business impact is measurable, the data model is relatively simple, and the sync to Salesforce or HubSpot is well-supported by every major platform.

Plan for organizational change

Reverse ETL changes who owns data activation. Data teams move from being order-takers who build one-off integrations to platform owners who enable self-service activation. Marketing and sales teams gain access to warehouse-quality data without needing to file Jira tickets. This shift requires organizational clarity on ownership, governance, and process, not just tooling.

Industry trends shaping reverse ETL in 2026 and beyond

AI and warehouse-native intelligence

The emergence of AI-powered features inside data warehouses is changing reverse ETL's value proposition. As warehouses like Snowflake Cortex, BigQuery ML, and Amazon Bedrock integration allow predictive models to run inside the warehouse itself, reverse ETL becomes the delivery mechanism that pushes AI-generated scores and recommendations directly into operational tools. The warehouse-as-AI-platform trend makes reverse ETL more strategically important, not less.

Composable CDP architecture

Customer Data Platforms (CDPs) traditionally stored their own copy of customer data. The composable CDP architecture treats the data warehouse as the customer data store and reverse ETL as the activation layer that feeds traditional CDP destinations. Hightouch's Composable CDP product is the clearest commercial expression of this shift. It means organizations can retire expensive standalone CDP licenses while achieving the same activation outcomes on top of warehouse infrastructure they already own.

Real-time and streaming requirements

Enterprise buyers increasingly require real-time or near-real-time sync capabilities, not just scheduled batch syncs. This is driving investment in Change Data Capture (CDC) streaming within reverse ETL platforms, allowing warehouse updates to trigger destination syncs within seconds rather than hours.

Data activation governance

As reverse ETL becomes mainstream, enterprises are recognizing the governance risk of uncontrolled data activation: wrong data flowing into the wrong destination at the wrong time. Leading vendors are investing in activation governance features including sync testing environments, data quality assertions before syncs execute, and approval workflows for high-stakes syncs that touch financial or regulated data.

Getting the data foundation right before activation

Reverse ETL tools are only as reliable as the data they pull from. This is a point that is easy to overlook in the excitement of evaluating shiny activation platforms, and it is the single most common reason reverse ETL implementations underdeliver.

If warehouse data is inconsistently modeled, lacks lineage, or is not governed with clear ownership, reverse ETL syncs will push bad data downstream at scale, faster than any manual process could have. The problem does not get smaller when automation is applied to it.

This is the architectural reality that makes a governed, AI-ready data foundation a prerequisite, not a parallel investment, to reverse ETL activation. Organizations that deploy reverse ETL on top of a well-governed lakehouse architecture, one where data is unified, cleansed, and tagged with clear lineage inside their own cloud environment, see dramatically higher success rates in production.

For teams building or modernizing their data foundation on AWS, exploring how LakeStack deploys a governed, AWS-native data layer in under four weeks (without custom engineering or infrastructure debt) provides important context for what a reliable reverse ETL source of truth looks like in practice.

Further reading for enterprise data leaders

The LakeStack blog publishes operational guidance for enterprise data leaders evaluating cloud data architecture decisions. Relevant reads include the manufacturing data lake guide and the healthcare data lake strategy piece, both of which address the governance and data readiness considerations that directly affect the reliability of downstream activation like reverse ETL.

Closing thoughts for decision makers

Reverse ETL is not a niche tool for data engineers. It is an enterprise capability that determines whether the intelligence your data warehouse holds actually reaches the teams who need it to do their jobs.

The market has validated this. A category that was valued at $0.68 billion in 2024 is projected to reach $4.12 billion by 2033 at a 22.3 percent CAGR, driven by an enterprise recognition that one-directional data pipelines are not enough. Cloud adoption, real-time personalization demands, and the rise of AI-ready data stacks are structural tailwinds that will continue to accelerate investment.

The leaders who make the right decisions now will be those who start with a clear use case, invest in data foundation quality before activation, choose platforms with credible enterprise governance capabilities, and build organizational models that empower business teams to use warehouse intelligence without depending entirely on data engineering queues.

The warehouse is not a destination. It is a launchpad. Reverse ETL is what makes it one.

Key takeaways

- Reverse ETL moves warehouse data into operational tools like CRM, marketing automation, and CS platforms, closing the gap between analytics and action.

- The global reverse ETL market is growing at 22.3% CAGR, projected to reach $4.12B by 2033.

- Enterprise-grade means: warehouse-first architecture, in-environment data movement, RBAC, audit logging, lineage, CDC streaming, and contractual SLA.

- Data foundation quality is a prerequisite. Reverse ETL amplifies whatever data quality exists in the warehouse, good or bad.

- Start with a specific, high-value use case and a controlled pilot before committing to a platform at scale.

Sources and citations

Source: MarketIntelo, Reverse ETL Market Research Report 2033 (August 2025)

Source: Mordor Intelligence, Extract Transform and Load (ETL) Market (January 2026)

Source: Integrate.io, Reverse ETL Usage Statistics 2026 (January 2026)

Source: Integrate.io, ETL Tools Market Size Statistics 2024-2025 (January 2026)

Source: Improvado, 12 Best Reverse ETL Tools for 2026 (April 2026)

Source: Hightouch, 10 Best Reverse ETL Tools (December 2025)

Source: Polytomic, Hightouch vs Fivetran comparison (2025)

Source: LakeStack by Applify, AWS-native data foundation accelerator

Source: Applify Blog, Manufacturing Data Lake (December 2025)

.png)

.png)